Google DeepMind has launched Gemma 4, a new family of open large language models designed to bring sophisticated AI capabilities directly to user devices. According to Google DeepMind, the release redefines on-device intelligence by enabling autonomous AI agents that perform complex tasks without depending on the cloud.

The models are now available under the Apache 2.0 license, a significant shift that grants developers complete freedom to use and distribute the technology. This move makes Gemma 4 truly open source, unlocking local, multimodal AI on everything from smartphones to Raspberry Pi devices, as reported by ZDNet. Built on the same architectural foundation as Gemini 3, Gemma 4 models are optimized for agentic AI and coding, supporting applications that require low latency and high digital sovereignty.

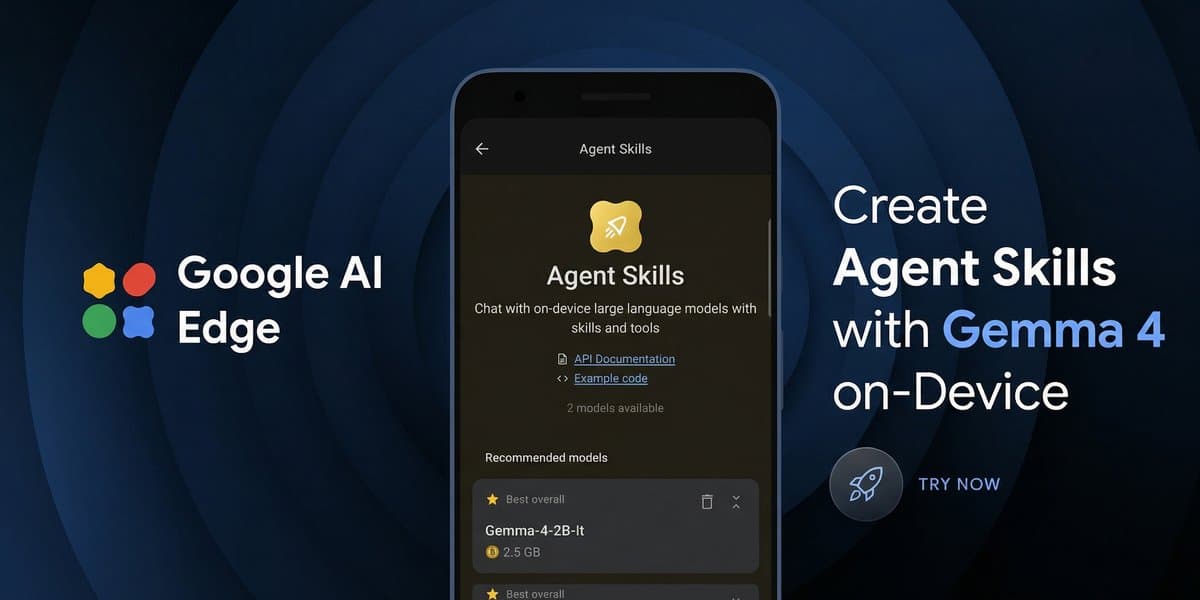

Developers can integrate Gemma 4 through Android's AICore Developer Preview or leverage Google AI Edge to build agentic, in-app experiences across mobile, desktop, and other edge devices. The Google AI Edge Gallery app for iOS and Android features "Agent Skills," showcasing how Gemma 4 enables on-device, multi-step workflows. These skills include augmenting knowledge bases by querying external sources like Wikipedia, producing rich interactive content such as summaries or visualizations, and expanding Gemma 4's core capabilities by integrating with other models for text-to-speech or image generation.

Together, these skills let users manage complex workflows and build their own applications end to end, entirely through conversational interactions. Integration with other models also opens up novel applications, such as pairing photos with mood-matching music.

The system uses GPU optimizations to process 4,000 input tokens across two distinct skills in under three seconds. On a Raspberry Pi 5, Gemma 4 reaches 133 tokens per second in prefill and 7.6 tokens per second in decode using the CPU. On devices with NPU acceleration, such as the Qualcomm Dragonwing IQ8, throughput rises to 3,700 prefill and 31 decode tokens per second.
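To put those throughput figures in perspective, the following minimal sketch converts them into rough wall-clock estimates. It assumes a simple model in which latency is prompt tokens divided by prefill rate plus output tokens divided by decode rate; the 100-token response length and the function name `estimate_latency` are illustrative assumptions, not figures or APIs from Google. Model loading, scheduling, and tokenization overhead are ignored.

```python
# Throughput figures quoted in the article, in tokens per second.
THROUGHPUT = {
    "Raspberry Pi 5 (CPU)": {"prefill": 133.0, "decode": 7.6},
    "Qualcomm Dragonwing IQ8 (NPU)": {"prefill": 3700.0, "decode": 31.0},
}


def estimate_latency(prompt_tokens, output_tokens, prefill_tps, decode_tps):
    """Return (prefill_seconds, decode_seconds, total_seconds).

    Hypothetical helper: assumes prefill and decode run back to back
    at constant rates, with no other overhead.
    """
    prefill_s = prompt_tokens / prefill_tps
    decode_s = output_tokens / decode_tps
    return prefill_s, decode_s, prefill_s + decode_s


if __name__ == "__main__":
    PROMPT_TOKENS = 4000  # prompt size mentioned in the article
    OUTPUT_TOKENS = 100   # assumed response length, for illustration only

    for device, tps in THROUGHPUT.items():
        prefill_s, decode_s, total_s = estimate_latency(
            PROMPT_TOKENS, OUTPUT_TOKENS, tps["prefill"], tps["decode"]
        )
        print(
            f"{device}: prefill {prefill_s:.1f}s, "
            f"decode {decode_s:.1f}s, total {total_s:.1f}s"
        )
```

Under these assumptions, a 4,000-token prompt takes about 30 seconds to prefill on the Raspberry Pi 5 CPU and about one second on the Dragonwing NPU, which suggests that prompt length matters far more on CPU-only hardware.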

Gemma 4 is available across mobile (Android, iOS), desktop (Windows, Linux, macOS via Metal, and the browser via WebGPU), and IoT/robotics platforms. NVIDIA RTX GPUs also offer optimized performance for Gemma 4 models. A new Python package and the litert-lm CLI tool simplify experimentation and power Gemma-based Python pipelines for IoT devices.


Gemma 4 is a new family of open large language models launched by Google DeepMind, designed to bring sophisticated AI capabilities directly to user devices. It enables autonomous AI agents to perform complex tasks without cloud dependence, redefining on-device intelligence.

Gemma 4 is truly open source under the Apache 2.0 license, granting developers complete freedom to use and distribute the technology. It supports advanced agentic AI features like multi-step planning and offline code generation across over 140 languages, facilitating robust, private, and low-latency AI applications.

Gemma 4 utilizes the LiteRT-LM framework, which optimizes model performance with a minimal memory footprint, allowing it to run using less than 1.5GB of memory on some devices. This framework supports 2-bit and 4-bit weights and leverages GPU optimizations, enabling efficient processing on hardware ranging from Raspberry Pi 5 to Qualcomm Dragonwing IQ8.
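The link between weight precision and memory footprint can be illustrated with simple arithmetic. The sketch below estimates weight storage for a hypothetical 2-billion-parameter model at several bit widths and compares it with the 1.5GB figure quoted above. The parameter count and the helper name `weight_memory_gb` are assumptions made for illustration; the article does not state model sizes, and real memory use also includes activations, the KV cache, and runtime overhead.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    HYPOTHETICAL_PARAMS = 2_000_000_000  # assumed size, not from the article
    BUDGET_GB = 1.5                      # memory figure quoted in the article

    for bits in (16, 8, 4, 2):
        gb = weight_memory_gb(HYPOTHETICAL_PARAMS, bits)
        verdict = "under" if gb < BUDGET_GB else "over"
        print(f"{bits:>2}-bit weights: {gb:.2f} GB ({verdict} {BUDGET_GB} GB)")
```

For this assumed model size, 16-bit and 8-bit weights alone would exceed the budget, while 4-bit and 2-bit weights leave headroom for the rest of the runtime.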

Gemma 4 is designed for broad compatibility, running on mobile devices (Android, iOS), desktop platforms (Windows, Linux, macOS), and IoT/robotics. It can be integrated through Android's AICore Developer Preview or Google AI Edge, and is optimized for hardware like NVIDIA RTX GPUs.