An essay by entrepreneur Matt Shumer claiming a new wave of AI advancements is poised to radically alter society has gone viral, sparking debate. While the post resonates with those already working with AI, it's being met with skepticism from others who see it as a self-serving alarm call from within the tech industry. The question is: are these new AI developments genuinely different, or are we just experiencing another cycle of AI hype?

The Viral Warning: Are We Really on the Brink?

Shumer's piece, titled "Something Big Is Happening," draws parallels between the current state of AI development and the period immediately preceding the COVID-19 pandemic. He argues that the latest generative AI models are already capable of taking over significant portions of jobs. This claim has resonated with some within the tech industry, with many high-profile accounts sharing Shumer's post.

Understanding the Concerns: AGI and the Singularity

The core of Shumer's argument hinges on progress toward AGI and the Singularity. AGI (artificial general intelligence) refers to AI that can perform any intellectual task a human can. The Singularity is the hypothetical point at which technological growth becomes uncontrollable and irreversible, resulting in unforeseeable changes to human civilization.

Shumer claims that OpenAI's GPT-5.3-Codex coding model helped create itself, and Anthropic has made similar claims. There's no question that generative AI has significantly impacted entry-level coding jobs. The debate is whether this represents a step change or a series of incremental improvements.

The Skeptics' View: Hype vs. Reality

Skeptics argue that warnings of imminent AGI should be taken with a grain of salt, pointing to existing limitations of AI models. A key concern is the tendency of large language models (LLMs) to "hallucinate," or generate false or misleading information. OpenAI's own documentation states that its latest model, GPT-5.2, has a hallucination rate of 10.9 percent.

Even with internet access to verify facts, the hallucination rate remains at 5.8 percent. The use of AI in the legal profession has already led to lawyers being censured for relying on AI-generated information. Such hallucinations call into question claims that AI can consistently perform complex tasks that require accuracy and reliability.

Examples Under Scrutiny: App Development and Legal Reasoning

Shumer cites AI's ability to create, test, and debug apps as evidence of its advanced capabilities. While impressive, critics point out that the existence of countless apps allows AI to model its work on existing examples. The ability to create apps more quickly, they argue, doesn't necessarily signal a fundamental shift.

Similarly, Shumer's claim that AI is akin to having a team of lawyers instantly available is challenged by the reality of AI-related errors in legal settings. The limitations of current AI models raise questions about their reliability in high-stakes situations.

OpenAI's Recent Moves: Signs of Progress or Pressure?

Recent decisions by OpenAI, like introducing ads into ChatGPT and rolling out an "adult" mode, have also drawn criticism. Some interpret these moves as signs that the company is prioritizing revenue generation over pursuing true AGI. On this reading, the company is under pressure to monetize its technology, which might conflict with its long-term AGI goals.

What's Next

- Continued advancements in AI model accuracy and reliability.

- Ethical considerations and regulations surrounding AI deployment.

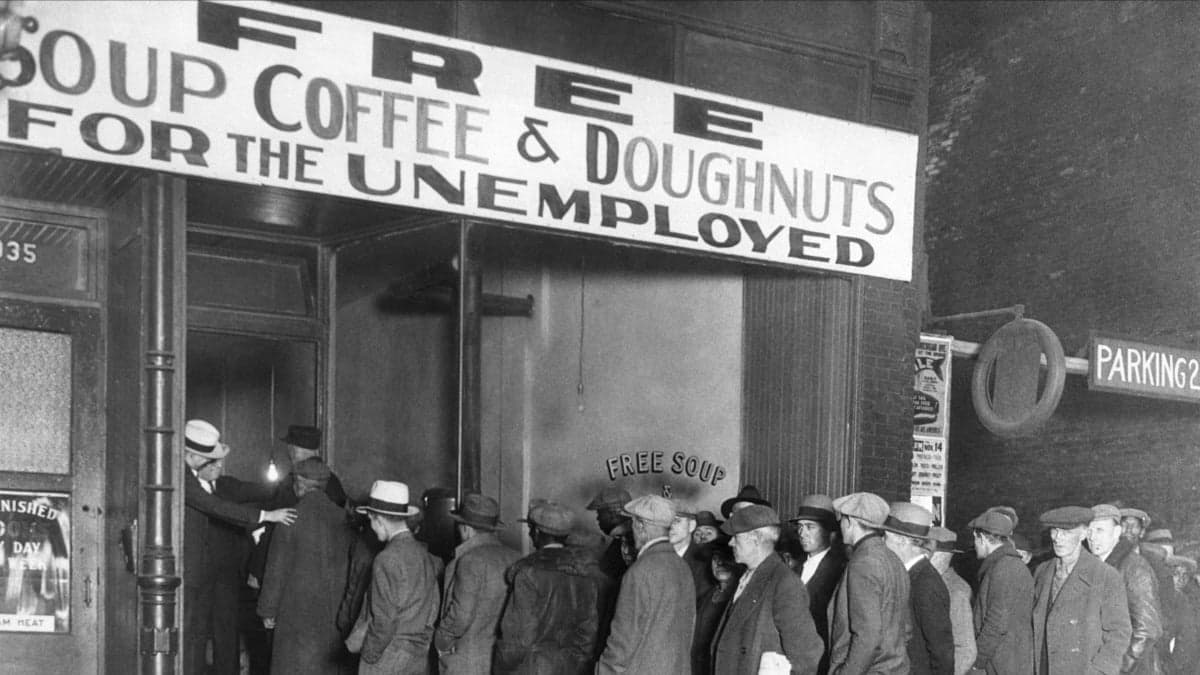

- Further debate about the potential impact of AI on the job market.

Why It Matters

- For users: Navigating the hype around AI to understand its real-world capabilities and limitations.

- For the industry: Balancing the pursuit of innovation with responsible development and transparency.

- For society: Preparing for the potential societal and economic impacts of increasingly advanced AI systems.

- For regulators: Developing appropriate frameworks to guide the development and deployment of AI technologies.

Source: Mashable
