Key Points About ART

- ART trains LLM-based agents for complex, real-world tasks.

- It uses reinforcement learning (GRPO) for "on-the-job" agent improvement.

- The framework supports various LLMs like Qwen, GPT-OSS, and Llama.

- W&B Training (Serverless RL) offers managed infrastructure, reducing costs and setup.

- ART integrates with tools like LangGraph, enhancing multi-step reasoning.

ART's Impact: Efficiency and Automation

ART functions like a specialized mentor for AI agents, teaching them to master complex workflows through trial and error, much as a human expert would guide a new hire. Instead of rigid programming, agents learn by performing tasks, receiving feedback, and adapting their strategies over time. This approach is critical for building agents that can navigate unpredictable environments and perform multi-step reasoning, moving beyond simple question-answering to active problem-solving. Development effort shifts from hand-coding agent behavior to defining tasks and the feedback that scores them.
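To make the "feedback" step concrete, here is a minimal sketch of the group-relative scoring idea behind GRPO, the method named in the key points. It is not ART's code. The function name `group_relative_advantages` and the `eps` parameter are illustrative assumptions, and the choice of population standard deviation is one common convention rather than a confirmed detail of ART.

```python
from statistics import mean, pstdev
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """Score each attempt relative to the other attempts at the same task.

    Hypothetical helper for illustration only; ART's internals may differ.
    Attempts above the group mean get a positive advantage, attempts below
    it get a negative one. `eps` avoids division by zero when every attempt
    earned the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std dev (an assumed convention)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four attempts at one task: two succeeded (reward 1.0), two failed (reward 0.0).
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
print(advantages)
```

Because each attempt is compared only with its own group, no separate value model is needed to decide which attempts to reinforce. When all attempts in a group receive the same reward, every advantage is zero and that group contributes no learning signal.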

ART’s architecture simplifies integrating reinforcement learning (RL) into any Python application. It separates the training logic (server) from the agent's interaction (client), allowing developers to focus on defining data, environment, and reward functions. According to OpenPipe's GitHub repository, this client-server split means an agent can be trained from a local machine while the server runs in a GPU-enabled environment and abstracts away the complexities of the inference and training loops. The framework supports a wide range of causal language models compatible with vLLM and Hugging Face Transformers.
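The division of responsibilities described above can be sketched with a toy, in-process stand-in. None of this is ART's API: `ToyBackend`, `Trajectory`, `reward_fn`, and `run_client` are hypothetical names, and the "model" is just a table of sampling weights. The sketch only shows the shape of the split, with the client owning the task and reward and the backend owning generation and updates.

```python
import random
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Trajectory:
    prompt: str
    response: str
    reward: float


class ToyBackend:
    """Hypothetical stand-in for the server side: owns the model and its updates."""

    def __init__(self, candidates: List[str], seed: int = 0) -> None:
        self.weights: Dict[str, float] = {c: 1.0 for c in candidates}
        self.rng = random.Random(seed)

    def generate(self, prompt: str) -> str:
        # "Inference": sample a response in proportion to its current weight.
        options = list(self.weights)
        return self.rng.choices(options, weights=[self.weights[o] for o in options])[0]

    def train(self, group: List[Trajectory]) -> None:
        # "Training": shift weight toward responses that beat the group mean.
        mean_reward = sum(t.reward for t in group) / len(group)
        for t in group:
            updated = self.weights[t.response] + 0.5 * (t.reward - mean_reward)
            self.weights[t.response] = max(0.05, updated)


def reward_fn(prompt: str, response: str) -> float:
    """Client-side reward: the only task-specific logic the developer writes."""
    return 1.0 if response == "4" else 0.0


def run_client(backend: ToyBackend, reward: Callable[[str, str], float],
               steps: int = 20, group_size: int = 8) -> ToyBackend:
    """Client loop: gather a group of attempts, score them, hand them to the backend."""
    prompt = "What is 2 + 2?"
    for _ in range(steps):
        group = []
        for _ in range(group_size):
            response = backend.generate(prompt)
            group.append(Trajectory(prompt, response, reward(prompt, response)))
        backend.train(group)
    return backend


backend = run_client(ToyBackend(["3", "4", "5"]), reward_fn)
print(backend.weights)
```

In a real deployment the backend would sit behind a network boundary on GPU hardware. The client loop keeps the same shape, which is what makes it possible to drive training from a laptop.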

Accelerating Agent Development

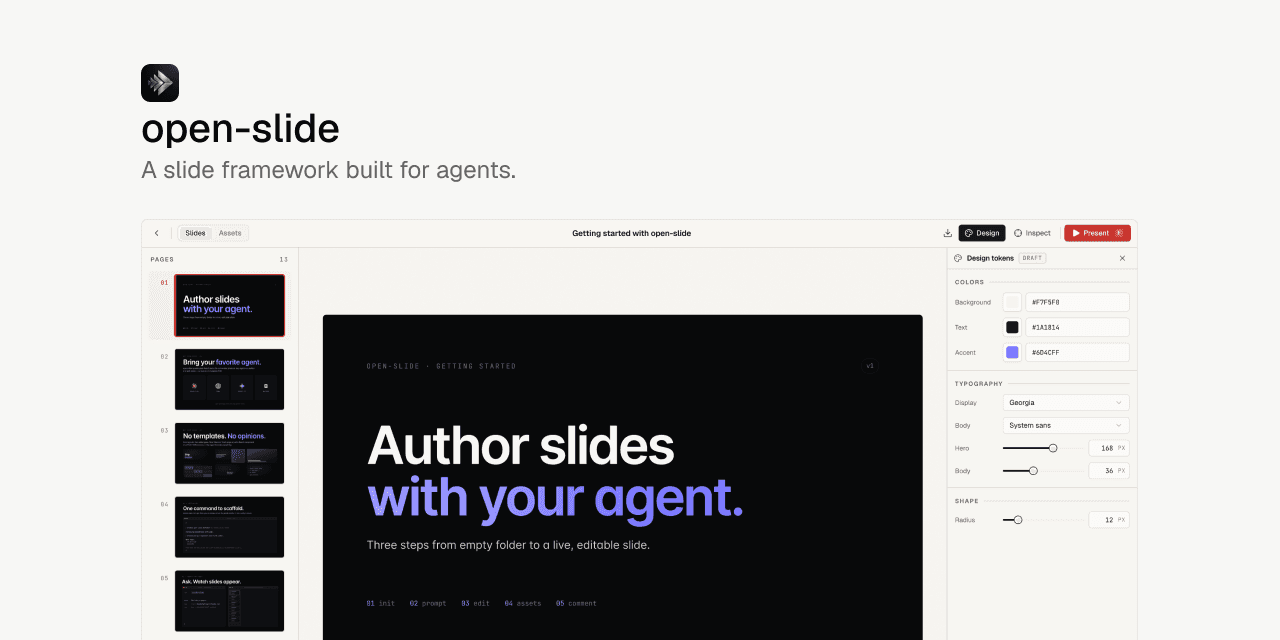

The integration with W&B Training (Serverless RL) marks a significant leap, offering the first publicly available service for flexible reinforcement learning model training. This fully managed infrastructure handles GPU setup and scaling, allowing developers to iterate quickly. Developers see 40% lower costs from multiplexing on shared inference clusters and 28% faster training, with scaling to over 2000 concurrent requests across multiple GPUs. Every trained checkpoint becomes instantly available via W&B Inference, shortening feedback cycles from hours to minutes.

This efficiency is crucial as agentic AI platforms gain traction. Similar to how OpenClaw has been likened to Linux for agentic AI, tools like ART are democratizing the creation of sophisticated AI agents. Retailers are already deploying AI to transform supply chains, moving from forecasting to real-time operations, with examples from Walmart, Amazon, and Albertsons improving flows by 15%, according to Let's Data Science. This growing demand for robust, adaptable agents underscores ART's value.

The Need for Robust Agent Training

The rise of powerful AI agents also highlights new challenges, particularly in security. Recent supply chain attacks, such as those targeting the Trivy vulnerability scanner, demonstrate how critical secure practices are for any new development paradigm. ART’s focus on reliable, experience-based learning helps create more resilient agents that can better handle real-world complexities and reduce the vulnerabilities that arise from rigid, brittle programming. Just as Adobe Firefly Custom Models allow creators to train AI image generators on specific assets for consistent aesthetics, ART empowers developers to fine-tune agent behavior for precise, consistent performance in critical tasks.

ART provides convenient wrappers to introduce RL training into existing applications, integrating with platforms like W&B, Langfuse, and OpenPipe for flexible observability and simplified debugging. The platform offers intelligent defaults, optimized for training efficiency and stability, while allowing custom configuration of training parameters and inference engine settings. This blend of ease of use and customizability helps ART meet diverse project needs as the agentic AI landscape continues to evolve.