Why Anthropic Is Challenging the Pentagon

The legal battle stems from Anthropic CEO Dario Amodei's assertion that his company's AI models should not be deployed for mass surveillance of Americans or to direct autonomous weapon systems. This stance drew swift condemnation from Defense Secretary Pete Hegseth and President Donald Trump, who announced the "supply chain risk" label "effective immediately." The measure is usually applied to companies from adversarial nations like China, according to The New York Times, and the unprecedented move has sent shockwaves through Silicon Valley.

Anthropic's lawsuit, a 48-page document filed in a California federal court, argues that White House officials acted unconstitutionally and out of retaliation. "The Constitution does not allow the government to wield its enormous power to punish a company for its protected speech," the lawsuit states, asserting that Anthropic "turns to the judiciary as a last resort to vindicate its rights and halt the Executive’s unlawful campaign of retaliation." The company also challenges the statutory authority underpinning the Pentagon’s designation, 10 U.S.C. § 3252, arguing that the department must use the least restrictive means to mitigate supply chain risk rather than punish a supplier, per Axios.

The "supply chain risk" designation is a severe measure that could effectively cut Anthropic off from lucrative U.S. government contracts, potentially costing the company hundreds of millions of dollars. Although Amodei initially apologized for his public resistance, the company’s decision to sue indicates a firm commitment to its ethical guidelines on AI deployment.

Industry Implications and Legal Outlook

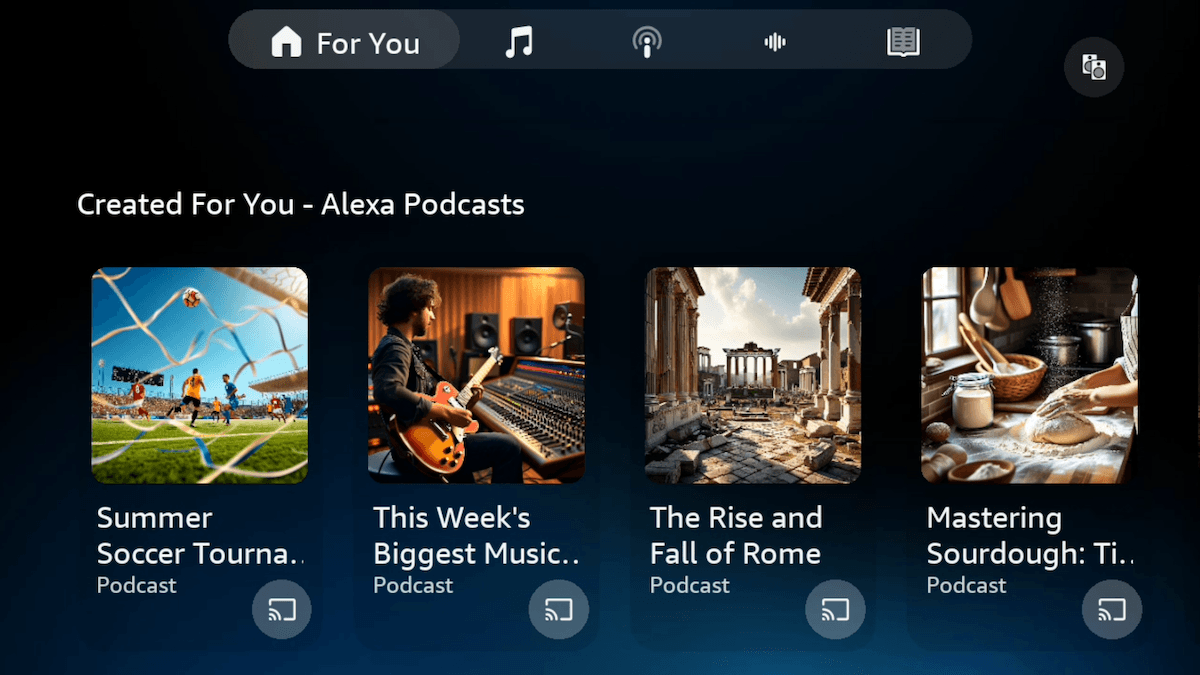

The Pentagon’s decision has ignited a broader debate about the government’s authority to dictate the terms of AI development and use, especially in national security applications. Applying the "supply chain risk" label to a U.S. company over what it views as protected speech highlights the escalating tension between tech ethics and governmental demands. OpenAI CEO Sam Altman, a rival to Amodei, also criticized the Trump administration's overreach in blacklisting Anthropic's technology, signaling widespread concern within the tech community.

Experts, however, suggest Anthropic faces a difficult legal battle. Brett Johnson, a partner at Snell & Wilmer, told Wired that "it's 100 percent in the government’s prerogative to set the parameters of a contract," implying limited avenues for appeal. Anthropic's strategy may involve arguing that it was unfairly singled out among U.S. government AI contractors. Despite the official designation, Anthropic's Claude chatbot reportedly continues to be used in some U.S. military operations, raising questions about the practicality and consistency of the Pentagon's ban. Meanwhile, other government agencies are expected to follow the presidential directive and stop using Claude, although Microsoft has stated it will continue offering the chatbot to non-DoD agencies.