Google DeepMind has released Gemini Robotics-ER 1.6, an upgraded model designed to provide robots with a more sophisticated understanding of the physical world. This iteration aims to bridge the gap between abstract reasoning and real-world execution by enhancing robots' spatial logic and multi-view processing.

The model is now available to developers via the Gemini API and Google AI Studio. A persistent challenge for physical robots has been their limited ability to interpret complex environments as humans do. Previous generations of AI models struggled with tasks requiring nuanced spatial awareness, planning, and real-time adjustments. According to Google DeepMind, Gemini Robotics-ER 1.6 addresses this by specializing in visual and spatial understanding, alongside improved task planning and success detection.

How Robots Understand Their Surroundings

Gemini Robotics-ER 1.6 refines a "reasoning-first" approach, enabling robots to process visual data with greater precision. This includes advancements in spatial logic and multi-view understanding, which allow a robot to build a comprehensive internal map of its surroundings from various perspectives.

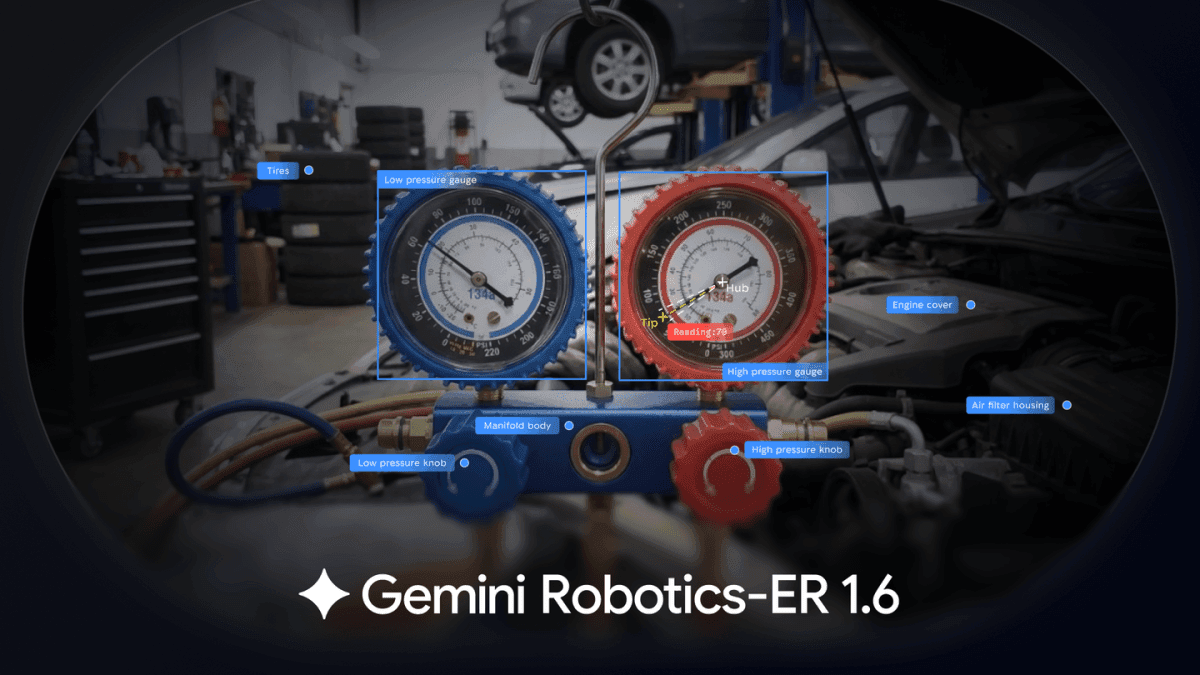

Essentially, the model helps robots discern objects' positions, orientations, and relationships within a dynamic space, improving navigation and manipulation.

A notable new feature is "instrument reading," a capability developed in collaboration with Boston Dynamics. It allows robots to accurately interpret complex gauges and sight glasses, which is crucial for industrial and inspection tasks. The model also sets a new safety benchmark, demonstrating superior compliance with safety protocols even when faced with adversarial spatial reasoning scenarios.

Marco da Silva, Vice President and General Manager of Spot at Boston Dynamics, said, "Advances like Gemini Robotics ER 1.6 mark an important step toward robots that can better understand and operate in the physical world." He highlighted the measured approach of rolling out new DeepMind capabilities through beta programs to a smaller group of customers so that features meet expectations before wider release, per IEEE Spectrum. This careful deployment is intended to ensure that models like Gemini Robotics-ER 1.6 achieve practical utility in the field.

The Broader Landscape of Embodied AI

DeepMind's advancements come amid rapid progress in embodied artificial intelligence, where several players are pushing similar boundaries. Companies like AGIBOT are developing foundation models such as GO-2, designed to bridge high-level reasoning with reliable execution in real-world settings. AGIBOT observed that traditional vision-language-action (VLA) models often disconnect reasoning signals from physical motor commands, leading to unreliable performance. Its approach combines action reasoning, hierarchical execution, and long-term memory to create a complete intelligent loop.

Similarly, Generalist AI introduced its Gen-1 model, aiming to instill "physical common sense" in robots. This model is engineered to serve as the brain for various robotic platforms, from humanoids to industrial arms. These collective efforts underscore an industry-wide focus on equipping robots with the intuitive understanding needed to operate effectively and safely in unstructured environments.

Google DeepMind's decision to make Gemini Robotics-ER 1.6 available to developers signals a push to accelerate the adoption and application of these capabilities.