Higgsfield now lets users generate cinematic images and videos directly within AI agents like Claude, turning conversational AI into a complete creative studio. As of late May 2024, this integration, powered by Higgsfield's Model Context Protocol (MCP) server, enables AI agents to produce high-quality visual content, train characters, and manage assets without leaving the conversation interface. The development simplifies complex creative workflows for marketers, e-commerce businesses, and content creators.

How Does Higgsfield Transform AI Agent Capabilities?

Higgsfield’s MCP connection turns AI agents such as Claude, OpenClaw, Hermes Agent, and NemoClaw into media generation platforms. Users add the Higgsfield MCP server URL to their agent's settings and authenticate with their Higgsfield account. The agent can then access over 30 AI models, including Soul and Nano Banana for images and Kling and Veo for video, and it automatically selects the best tool for a given task.

The integration covers a range of output, from 4K resolution images to cinematic videos up to 15 seconds long. Users can specify aspect ratios and durations, and can maintain character consistency across frames using Soul Characters. This streamlines production, letting users go from concept to polished content within a single chat session.
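
As a rough illustration of the setup step, the sketch below builds a settings entry that points an agent at a remote MCP server. Everything specific here is an assumption: the URL is a placeholder (the article does not give the real one), `build_mcp_entry` is a hypothetical helper, and the `mcpServers` / `url` layout only mirrors a convention used by some MCP clients. Each agent defines its own settings format, and authentication with a Higgsfield account happens separately, so check the agent's documentation before copying this.

```python
import json
from urllib.parse import urlparse

# Placeholder only: the real Higgsfield MCP server URL is not given in the article.
PLACEHOLDER_URL = "https://example.invalid/mcp"


def build_mcp_entry(name: str, url: str) -> dict:
    """Return a settings fragment for one remote MCP server.

    The "mcpServers"/"url" keys are an assumed layout, not a documented
    Higgsfield or agent-specific schema.
    """
    parsed = urlparse(url)
    # Refuse anything that is not a well-formed HTTPS URL.
    if parsed.scheme != "https" or not parsed.netloc:
        raise ValueError(f"expected an https URL, got {url!r}")
    return {"mcpServers": {name: {"url": url}}}


if __name__ == "__main__":
    entry = build_mcp_entry("higgsfield", PLACEHOLDER_URL)
    print(json.dumps(entry, indent=2))
```

Validating the URL before writing it into settings is a small design choice that catches pasted values missing the scheme or host.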

What Creative Workflows Does This Enable?

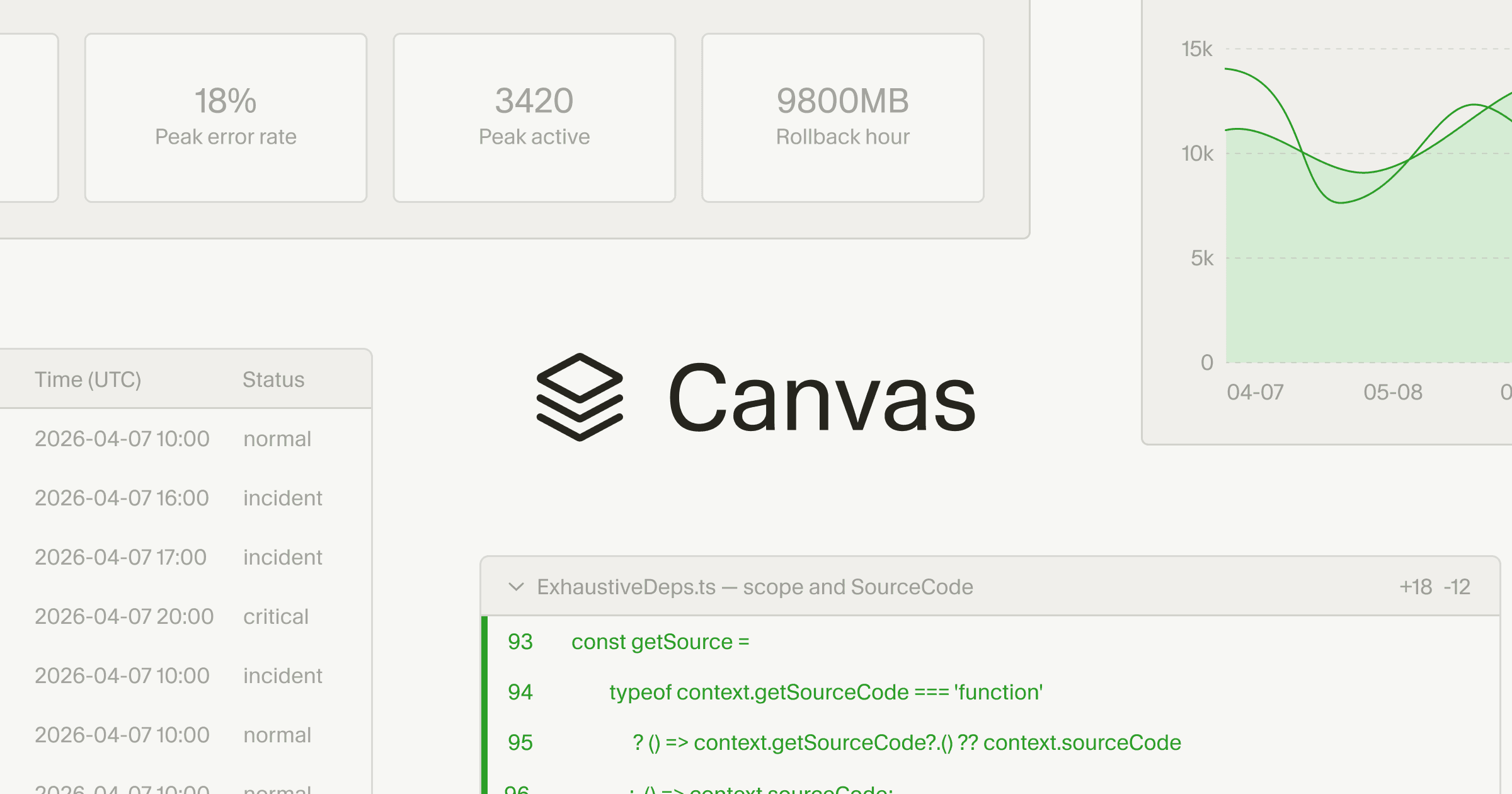

The platform is designed to replace several traditional creative roles, potentially saving businesses significant costs. For e-commerce, it can generate lifestyle product shots and promotional videos, eliminating the need for a physical photo studio. Social media managers can produce scroll-stopping images and short-form videos for platforms like Instagram and TikTok, moving from idea to post in moments.

Marketing agencies can scale campaign visuals, generating dozens of variations across styles and models in minutes to deliver client-ready assets. The system also supports filmmaking, helping previsualize shots, create concept art, and produce cinematic clips. Infographics and visual data can be enhanced as well, turning abstract numbers into illustrations and icons.

The platform’s "ad engine" feature can find top-spending niches, generate video formats such as UGC or TV spots, write outreach, and deliver weekly reports, aiming to replace a "$5K/month retainer," according to Higgsfield.

This level of automation aligns with the broader trend of AI agents taking on more complex tasks. That trend carries risk: some agents can already scan for vulnerabilities faster than human teams, so sensitive actions call for careful implementation, as reported by CyberScoop. Higgsfield addresses this by providing a structured, secure environment for creative output.

What Does This Mean for Content Creation?

The integration lets users build comprehensive visual systems from a single conversation. Users can train a Soul Character from existing photos, then generate a 10-image lookbook across various scenes and styles. They can also compare multiple AI models side by side, running the same prompt through options like Flux, Cinema Studio, and Seedream to determine the best output before iterating further.

This rapid iteration and comparison makes experimentation faster and cheaper. Images typically complete in seconds; videos take longer but run asynchronously, so the conversation is not blocked while they render. The system also lets users feed past generations back in as input, supporting the iterative workflow needed to refine creative projects.

This mirrors advancements in AI video generation seen globally, such as the Kling tool from China's Kuaishou, which significantly advances what’s possible in AI-generated video, per The Wall Street Journal. The ability for AI agents to directly manage and execute these creative tasks marks a significant shift, providing an integrated solution for content production at scale.
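
The side-by-side comparison and asynchronous execution described above can be sketched with Python's `asyncio`. This is a simulation under stated assumptions, not Higgsfield's API: `generate` is a hypothetical stand-in that sleeps instead of calling any service, and the model names are simply the ones mentioned in the article.

```python
import asyncio

# Model names taken from the article; used here only as labels.
MODELS = ["Flux", "Cinema Studio", "Seedream"]


async def generate(model: str, prompt: str, delay: float = 0.01) -> dict:
    """Hypothetical stand-in for a generation call.

    It sleeps briefly to imitate a job that finishes later, then returns
    a small result record. No real service is contacted.
    """
    await asyncio.sleep(delay)
    return {"model": model, "prompt": prompt, "status": "completed"}


async def compare_models(prompt: str, models: list[str] = MODELS) -> list[dict]:
    """Run the same prompt through every model concurrently."""
    jobs = [generate(model, prompt) for model in models]
    # gather() runs the jobs concurrently and returns results in input order.
    return await asyncio.gather(*jobs)


if __name__ == "__main__":
    results = asyncio.run(compare_models("red sneaker on a beach at sunset"))
    for result in results:
        print(f'{result["model"]}: {result["status"]}')
```

Because the jobs run concurrently, total wait time is roughly that of the slowest model instead of the sum of all of them, which is the practical benefit of asynchronous generation when comparing several outputs.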