Local Deep Research is an open-source AI research assistant that runs on a user's own machine and offers deep, agentic research capabilities with a focus on privacy. The tool automates complex queries by searching more than 10 sources, including the web, academic databases, and private documents. According to its documentation as of Q2 2024, it achieves approximately 95% accuracy on the SimpleQA benchmark.

The project, from developer LearningCircuit, lets users run analysis locally, connect to any Large Language Model (LLM), and build a personal, searchable knowledge base. It functions as a private intelligence analyst, synthesizing findings into a cited report without user data ever leaving the user's device.

How Does It Automate Research?

Local Deep Research employs an "agentic" approach to finding answers. Instead of running a single search, it uses an autonomous agent powered by frameworks like LangGraph. This AI agent decides which search tools to use based on the query's context, switching between academic engines like arXiv and PubMed and general web search.
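To make the idea of tool selection concrete, here is a minimal sketch of a query router. It is not the project's implementation: a simple keyword heuristic stands in for the LLM's decision, and every name in it (`choose_tool`, `KEYWORDS`, the placeholder search functions) is hypothetical.

```python
from typing import Callable, Dict, Tuple

# Placeholder tools: each returns a label instead of calling a real search API.
def search_arxiv(query: str) -> str:
    return f"[arxiv] results for: {query}"

def search_pubmed(query: str) -> str:
    return f"[pubmed] results for: {query}"

def search_web(query: str) -> str:
    return f"[web] results for: {query}"

TOOLS: Dict[str, Callable[[str], str]] = {
    "arxiv": search_arxiv,
    "pubmed": search_pubmed,
    "web": search_web,
}

# Assumed keyword hints; a real agent would ask the LLM to pick a tool instead.
KEYWORDS: Dict[str, Tuple[str, ...]] = {
    "pubmed": ("clinical", "disease", "drug", "patient"),
    "arxiv": ("neural", "algorithm", "theorem", "quantum"),
}

def choose_tool(query: str) -> str:
    """Return the name of the tool whose hints match the query, else 'web'."""
    words = query.lower().split()
    for tool, hints in KEYWORDS.items():
        if any(hint in words for hint in hints):
            return tool
    return "web"

def run(query: str) -> str:
    """Route the query to the chosen tool and return its (placeholder) output."""
    return TOOLS[choose_tool(query)](query)
```

Calling `run("quantum error correction")` would route to the arXiv placeholder, while a query with no matching hint falls back to general web search.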

The process involves several steps:

- Automated Search: The tool queries multiple sources simultaneously.

- Source Aggregation: It downloads relevant documents, articles, and papers directly into an encrypted local library.

- Synthesis and Citation: The LLM synthesizes the information into a coherent report, complete with proper citations.
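The three steps above can be sketched as a small pipeline. This is an illustration under stated assumptions, not the tool's actual code: `fake_search` replaces real search engines, a plain dictionary replaces the encrypted library, and string formatting replaces the LLM's synthesis.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Dict, List

@dataclass(frozen=True)
class Document:
    source: str
    title: str
    text: str

def fake_search(source: str, query: str) -> List[Document]:
    """Hypothetical stand-in for one search engine; makes no network call."""
    return [Document(source, f"{source} result for '{query}'",
                     f"Text about {query} from {source}.")]

def search_all(query: str, sources: List[str]) -> List[Document]:
    """Step 1: query every source concurrently, keeping the source order."""
    with ThreadPoolExecutor(max_workers=len(sources)) as pool:
        batches = pool.map(lambda s: fake_search(s, query), sources)
        return [doc for batch in batches for doc in batch]

def aggregate(library: Dict[str, Document], docs: List[Document]) -> None:
    """Step 2: store documents in the library, de-duplicated by title."""
    for doc in docs:
        library.setdefault(doc.title, doc)

def synthesize(query: str, docs: List[Document]) -> str:
    """Step 3: build a report with numbered citations (no LLM involved here)."""
    lines = [f"Report: {query}"]
    lines += [f"{doc.text} [{i}]" for i, doc in enumerate(docs, start=1)]
    lines.append("Sources:")
    lines += [f"[{i}] {doc.title} ({doc.source})"
              for i, doc in enumerate(docs, start=1)]
    return "\n".join(lines)
```

A session would call `search_all`, pass the results to `aggregate`, and then hand the same documents to `synthesize`, so every statement in the report maps back to a numbered source.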

This system allows the user's knowledge to compound. Each research session adds to a private, indexed library that the tool can search in future queries, blending web results with insights from a user's own curated document collection.
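As a rough illustration of how a growing library can feed later sessions, the sketch below scores stored documents by word overlap and lists local hits ahead of fresh web results. The `LocalLibrary` class and `blended_results` function are hypothetical, and word overlap is a deliberately simple substitute for whatever indexing the project actually uses.

```python
from typing import Dict, List, Tuple

class LocalLibrary:
    """Toy in-memory library; the real tool stores documents in an encrypted database."""

    def __init__(self) -> None:
        self._docs: Dict[str, str] = {}

    def add(self, title: str, text: str) -> None:
        self._docs[title] = text

    def search(self, query: str, limit: int = 3) -> List[Tuple[str, int]]:
        """Return (title, score) pairs, where score counts shared words."""
        terms = set(query.lower().split())
        scored = []
        for title, text in self._docs.items():
            score = len(terms & set(text.lower().split()))
            if score:
                scored.append((title, score))
        # Highest score first; ties broken alphabetically by title.
        scored.sort(key=lambda pair: (-pair[1], pair[0]))
        return scored[:limit]

def blended_results(library: LocalLibrary, web_titles: List[str],
                    query: str) -> List[str]:
    """List local hits first, followed by new web results."""
    local = [f"local: {title}" for title, _ in library.search(query)]
    web = [f"web: {title}" for title in web_titles]
    return local + web
```

Each document added in one session becomes a candidate result in the next, which is the compounding effect described above.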

Is Your Data Actually Secure?

The core design of Local Deep Research prioritizes user privacy and data ownership. The entire system can run fully offline when paired with local LLMs via Ollama and a self-hosted search engine like SearXNG. According to the project's GitHub page, it contains no telemetry, analytics, or tracking of any kind.
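To show what "local" means in practice, the sketch below builds the two HTTP requests such a setup relies on, both aimed at `localhost`. It assumes Ollama on its default port 11434 and a SearXNG instance on port 8080 with JSON output enabled in its settings; the port for SearXNG and the helper names are assumptions. The requests are only constructed, not sent, and this is not how Local Deep Research itself is configured.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request

OLLAMA_URL = "http://localhost:11434"   # Ollama's default address
SEARXNG_URL = "http://localhost:8080"   # assumed address of a self-hosted SearXNG

def searxng_request(query: str) -> Request:
    """Build a GET request for SearXNG's search endpoint with JSON output."""
    params = urlencode({"q": query, "format": "json"})
    return Request(f"{SEARXNG_URL}/search?{params}")

def ollama_request(model: str, prompt: str) -> Request:
    """Build a POST request for Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return Request(
        f"{OLLAMA_URL}/api/generate",
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Passing either object to `urllib.request.urlopen` would send it, provided the corresponding service is running on the machine.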

Security is managed at the database level. Each user's data is stored in an isolated SQLCipher database encrypted with AES-256, a security standard used by applications like Signal. This zero-knowledge architecture means that even an administrator with server access cannot decrypt or read the research data. API keys and other credentials do exist in memory during an active session, which is standard practice for most applications, and the project mitigates that risk with session-scoped credential lifetimes.
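The reason an administrator cannot read the data comes down to key derivation: the encryption key is computed from a secret only the user holds. The sketch below illustrates that step with Python's standard library. It assumes PBKDF2-HMAC-SHA512 with 256,000 iterations, which matches SQLCipher 4's documented defaults, but the function name and the way the project wires passwords to databases are assumptions.

```python
import hashlib
import os

def new_salt() -> bytes:
    """Generate a random 16-byte salt, one per database."""
    return os.urandom(16)

def derive_key(password: str, salt: bytes, iterations: int = 256_000) -> bytes:
    """Derive a 32-byte (256-bit) key from a password with PBKDF2-HMAC-SHA512.

    Without the password, the stored salt alone is not enough to
    reproduce the key, so the database file stays unreadable.
    """
    return hashlib.pbkdf2_hmac(
        "sha512", password.encode("utf-8"), salt, iterations, dklen=32
    )
```

The same password and salt always yield the same key, while a different password yields an unrelated one, which is why each user's database can only be opened by that user.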

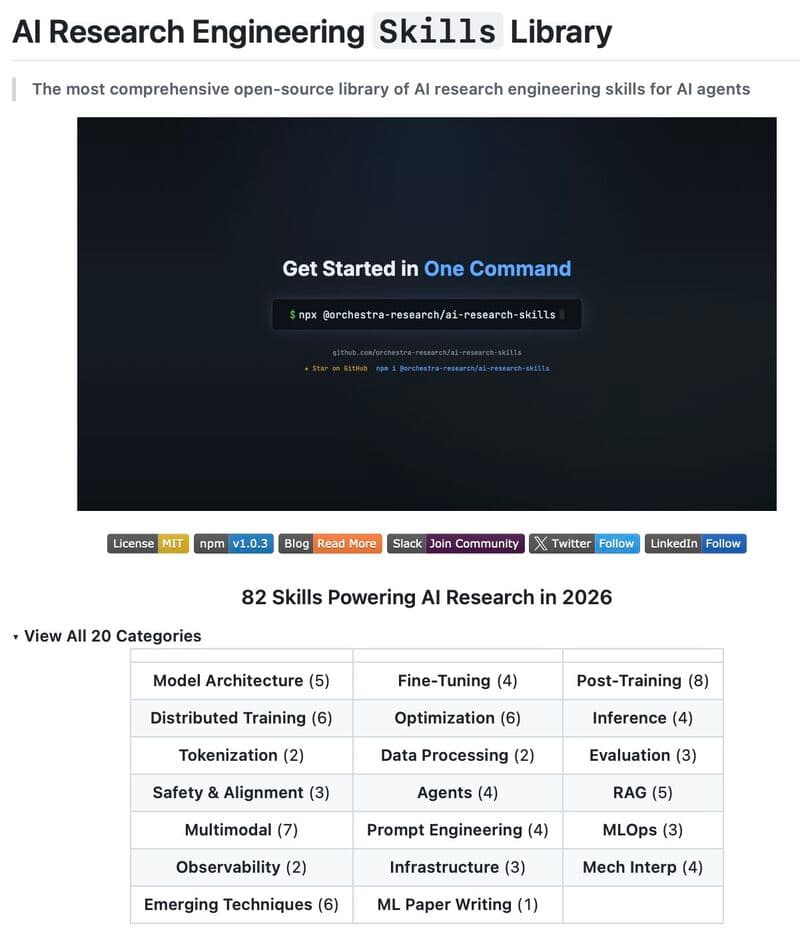

How Can Developers Use This Tool?

Local Deep Research is built for extensibility, offering several ways for developers to integrate it into their workflows. It provides a REST API for programmatic access, a Python API for simple scripting, and command-line tools for benchmarking and management.

A key feature is its integration with the broader AI ecosystem. It can connect to any LangChain-compatible vector store, such as Pinecone or Chroma, allowing the tool to search existing company knowledge bases. It also provides a Model Context Protocol (MCP) server, enabling AI assistants like Claude to use Local Deep Research as a specialized tool for complex queries. To help users select the best model for their needs, the project points to a community-maintained benchmark dataset on Hugging Face that tracks the performance of various local and cloud LLMs.
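For readers unfamiliar with MCP, a tool invocation is a JSON-RPC 2.0 message with the method `tools/call`. The sketch below builds such a message; the tool name `deep_research` and its `query` argument are hypothetical, since the names the project's MCP server actually exposes are not given here.

```python
import json
from itertools import count
from typing import Any, Dict

_request_ids = count(1)  # JSON-RPC requests carry a unique id

def tool_call(name: str, arguments: Dict[str, Any]) -> str:
    """Serialize an MCP 'tools/call' request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }
    return json.dumps(message)
```

An assistant acting as an MCP client would send a message like `tool_call("deep_research", {"query": "..."})` to the server and receive the research result in the matching JSON-RPC response.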

The Trending Society Take

Tools like Local Deep Research mark a significant shift in the AI landscape. While massive, cloud-based models from major tech companies have dominated headlines, a powerful counter-movement toward local, private, and user-controlled AI is gaining momentum. This project demonstrates that sophisticated, agentic capabilities are no longer the exclusive domain of large enterprises. By decoupling advanced research AI from centralized data collection, Local Deep Research empowers individual developers, journalists, and researchers to leverage cutting-edge technology without compromising privacy, paving the way for a new class of secure, personalized AI applications.