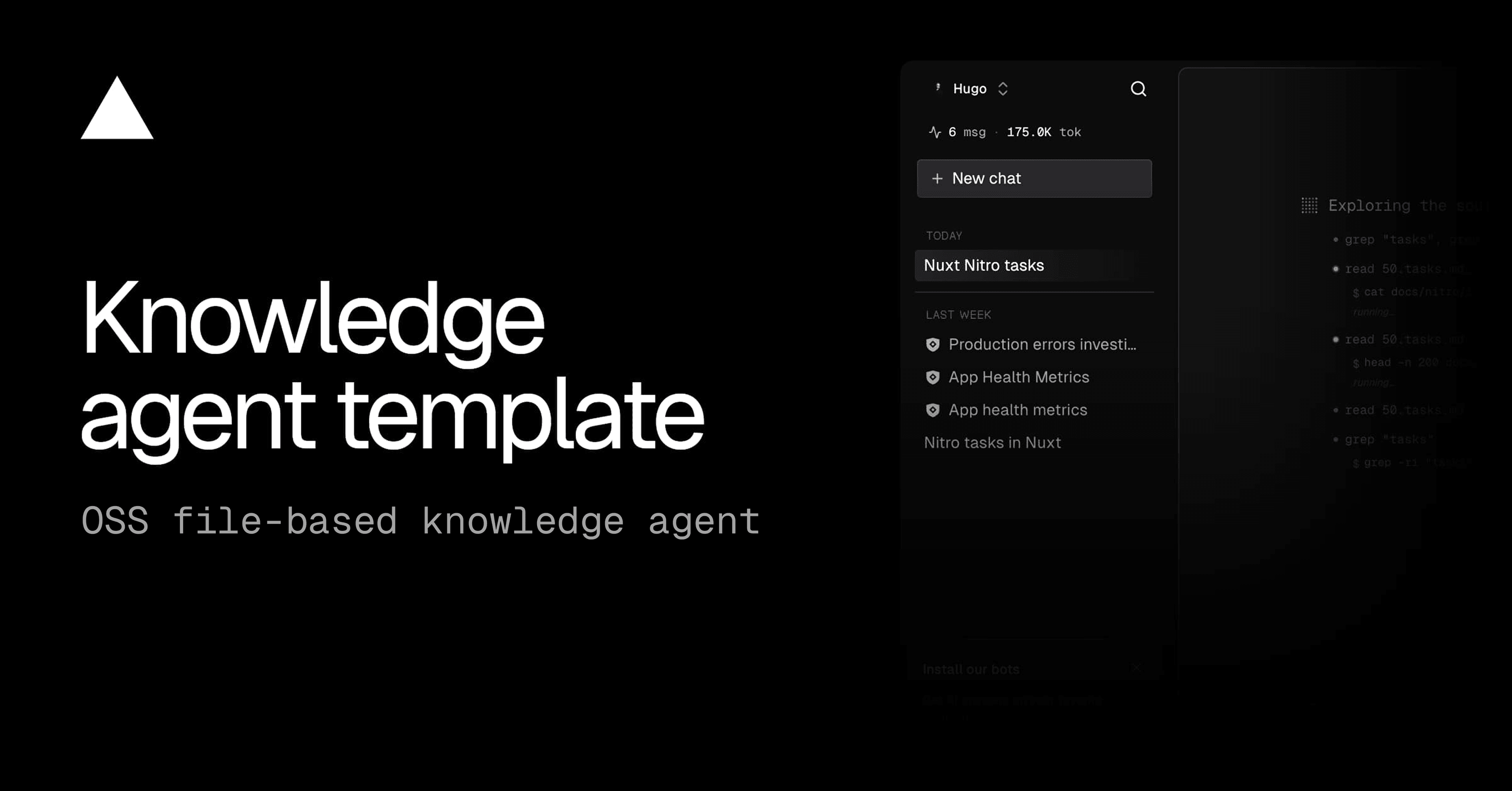

Datalab's Chandra OCR 2 model converts complex images and PDFs into structured digital formats, with significant improvements in handling math, tables, layout, and multilingual content. According to Datalab's GitHub repository, the model was released in March 2026 and lets developers accurately extract information from documents into HTML, Markdown, or JSON while preserving the original layout. For builders in finance, legal, and academia, that promises noticeably faster data processing workflows.

Imagine a financial analyst needing to ingest hundreds of scanned annual reports, each filled with intricate tables, legal disclaimers, and handwritten annotations. Traditionally, this process involves painstaking manual data entry or error-prone OCR solutions that struggle with non-standard layouts. Chandra OCR 2 acts as a digital clerk, capable of parsing these complex documents with high fidelity, converting them into immediately usable structured data. This capability means a dramatic reduction in manual effort and a significant boost in data accessibility.
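Suppose a converted report returns its tables as HTML `<table>` markup. This is an assumption here, so check what your chosen output format actually emits. Turning such a table into rows for a spreadsheet or DataFrame then needs only Python's standard library. The sketch below uses `html.parser`. The class and function names are hypothetical and are not part of Chandra.

```python
from html.parser import HTMLParser


class TableRowParser(HTMLParser):
    """Collect the text of each <td>/<th> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []     # finished rows, each a list of cell strings
        self._row = None   # row currently being filled, or None
        self._cell = None  # text fragments of the open cell, or None

    def _close_cell(self):
        # Append the open cell (if any) to the current row, then reset it.
        if self._cell is not None and self._row is not None:
            self._row.append("".join(self._cell).strip())
        self._cell = None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._close_cell()  # tolerate a missing </td>
            self._cell = []

    def handle_endtag(self, tag):
        if tag in ("td", "th"):
            self._close_cell()
        elif tag == "tr" and self._row is not None:
            self._close_cell()
            self.rows.append(self._row)
            self._row = None

    def handle_data(self, data):
        # Text inside nested tags such as <b> still lands in the open cell.
        if self._cell is not None:
            self._cell.append(data)


def html_table_to_rows(html):
    """Return every table row in `html` as a list of cell strings."""
    parser = TableRowParser()
    parser.feed(html)
    parser.close()
    return parser.rows


sample = """<table>
<tr><th>Year</th><th>Revenue</th></tr>
<tr><td>2024</td><td>1,204</td></tr>
</table>"""
print(html_table_to_rows(sample))  # → [['Year', 'Revenue'], ['2024', '1,204']]
```

The parser ignores `colspan` and `rowspan`. Merged header cells, which are common in financial statements, would need extra handling.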

The model accurately reconstructs forms, including checkboxes, and performs strongly on math equations and complex multi-column layouts. It also extracts images and diagrams, providing captions and structured metadata alongside the main text. As a result, the digital output retains the full contextual and visual information of the original document, not just its text.

Chandra OCR 2 supports more than 90 languages, a critical feature for global operations. Multilingual performance was a primary focus of this release. Datalab built custom benchmarks to test tables, math, ordering, layout, and text accuracy across diverse languages. On various benchmarks, the model outperforms competitors like olmOCR and dots.ocr, as well as large language models such as GPT-4o and Gemini Flash 2. Across 90 languages it averages 72.7% accuracy, compared with 60.8% for Gemini 2.5 Flash.
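The arithmetic below puts the multilingual averages in perspective. The "missed points" reading treats 100 minus the score as the share each model gets wrong. That is an interpretation, not something the benchmark itself is stated to define.

```python
def score_gap(ours, baseline):
    """Compare two benchmark scores given in percent."""
    absolute = ours - baseline        # gap in percentage points
    relative = absolute / baseline    # gain as a fraction of the baseline score
    # Interpretation (assumption): (100 - score) is what each model misses.
    missed_reduction = absolute / (100.0 - baseline)
    return absolute, relative, missed_reduction


abs_pts, rel, missed = score_gap(72.7, 60.8)
print(f"{abs_pts:.1f} points ahead, {rel:.1%} relative gain, "
      f"{missed:.1%} fewer missed points")
# → 11.9 points ahead, 19.6% relative gain, 30.4% fewer missed points
```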

Support for multiple output formats and languages lets developers integrate Chandra into diverse workflows, from real-time document processing to large-scale data migration. Its ability to handle handwritten content and complex forms makes it especially valuable in healthcare and legal work, where such documents are common. AI tools such as GitHub Copilot are increasingly trained on user interaction data and code snippets, which makes precise, privacy-conscious document processing even more important. Chandra provides a powerful open-source foundation for developers building these data-driven applications.

The Chandra code is open source under the Apache 2.0 license, while the model weights use a modified OpenRAIL-M license. The weights are free to use for research, personal projects, and startups with under $2M in funding or revenue. Broader commercial licensing is available through Datalab. This tiered model encourages adoption while protecting the innovation behind the Datalab API.

Chandra OCR 2 is a document intelligence model by Datalab that converts complex images and PDFs into structured digital formats like HTML, Markdown, or JSON. It accurately extracts information from documents while preserving their original layout, including tables, math, and multilingual content, making it easier to process and analyze data.

Chandra OCR 2 boasts several key features, including converting images and PDFs to structured formats, preserving complex layouts, supporting over 90 languages, excelling at handwriting recognition and form reconstruction, and extracting images, diagrams, and structured data. It also offers both local (HuggingFace) and remote (vLLM) inference modes for flexible deployment.
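In practice you would use Chandra's own tooling for the remote mode. However, vLLM serves an OpenAI-compatible chat completions endpoint, so a page image can also be sent with plain HTTP. The following minimal sketch assumes such a server is running locally. The URL, served model name, and prompt are hypothetical placeholders, and the prompt is a stand-in, not the one Chandra uses.

```python
import base64
import json
import urllib.request

# Hypothetical placeholders, not taken from Datalab's documentation.
VLLM_URL = "http://localhost:8000/v1/chat/completions"
MODEL_NAME = "chandra"
PROMPT = "Convert this page to Markdown, preserving tables and layout."


def build_page_request(image_bytes, mime="image/png", max_tokens=4096):
    """Build an OpenAI-style chat request carrying one page image as a data URL."""
    b64 = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": MODEL_NAME,
        "max_tokens": max_tokens,
        "temperature": 0.0,  # deterministic decoding suits transcription
        "messages": [{
            "role": "user",
            "content": [
                {"type": "image_url",
                 "image_url": {"url": f"data:{mime};base64,{b64}"}},
                {"type": "text", "text": PROMPT},
            ],
        }],
    }


def send_request(payload, url=VLLM_URL, timeout=120):
    """POST the payload and return the generated text (needs a running server)."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a server up, `send_request(build_page_request(page_bytes))` would return the model's text for one page, where `page_bytes` holds a PNG read from disk.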

Chandra OCR 2 outperforms competitors like olmOCR, dots.ocr, GPT-4o, and Gemini Flash 2 on various benchmarks. It achieves an average of 72.7% accuracy across 90 languages, compared to Gemini 2.5 Flash's 60.8% accuracy, demonstrating its superior ability to handle diverse linguistic inputs and complex document structures.

Chandra OCR 2 offers two inference modes: local (HuggingFace) and remote (vLLM). The vLLM server, deployable via Docker, can process approximately 2 pages per second on a single NVIDIA H100 GPU, making it suitable for high-throughput, production-grade batch processing.
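The quoted throughput makes rough capacity planning a matter of division. This sketch turns a page count into wall-clock hours using the figure of about 2 pages per second per H100. The workload is hypothetical. Linear scaling with GPU count is an optimistic simplification, because real throughput depends on page density and server settings.

```python
def batch_hours(total_pages, pages_per_second=2.0, num_gpus=1):
    """Rough wall-clock estimate in hours, assuming throughput scales
    linearly with the number of GPUs (an optimistic simplification)."""
    if pages_per_second <= 0 or num_gpus < 1:
        raise ValueError("throughput and GPU count must be positive")
    seconds = total_pages / (pages_per_second * num_gpus)
    return seconds / 3600.0


# Hypothetical workload: 500 annual reports of about 200 pages each.
pages = 500 * 200
print(f"{batch_hours(pages):.1f} h on one H100")        # → 13.9 h on one H100
print(f"{batch_hours(pages, num_gpus=4):.1f} h on four")  # → 3.5 h on four
```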

Industries that handle large volumes of complex documents, such as finance, legal, healthcare, and academia, can significantly benefit from Chandra OCR 2. Its ability to accurately extract data from various document types, including those with handwritten content and complex layouts, makes it invaluable for digitizing archives, automating data entry, and gaining deeper insights from unstructured information.
