OpenAI has made its latest compact large language models, GPT-5.4 Mini and GPT-5.4 Nano, available on the Vercel AI Gateway, providing developers with powerful, cost-effective options for complex agentic workflows. These models deliver state-of-the-art performance for their size class in coding and general computer use, specifically optimized for sub-agent architectures where multiple smaller models collaborate on larger tasks, according to Vercel's changelog. This move simplifies integration and offers enhanced control over model responses.

GPT-5.4 Nano offers performance remarkably close to the Mini tier at a significantly lower price point. This makes it well suited to high-volume applications, particularly sub-agent workflows where costs can escalate quickly with parallel calls. Both models introduce new parameters for verbosity and reasoning level, giving developers granular control over how detailed a response is and how much "thought" the model applies before generating an answer.
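As a rough illustration of how these two controls might be set per task, here is a minimal TypeScript sketch. The model IDs (`openai/gpt-5.4-mini`, `openai/gpt-5.4-nano`), the option names `reasoningEffort` and `textVerbosity`, and the helper `settingsFor` are all assumptions made for this example; the changelog summary above does not spell out the exact parameter names, so check the current AI SDK documentation before relying on them.

```typescript
// Hypothetical sketch: model IDs and option names are assumptions,
// not confirmed by the changelog summarized in this article.

type Level = "low" | "medium" | "high";

interface CallSettings {
  model: string;
  providerOptions: {
    openai: { reasoningEffort: Level; textVerbosity: Level };
  };
}

// Map a task profile to a model tier plus verbosity/reasoning settings.
function settingsFor(task: "classify" | "codegen"): CallSettings {
  if (task === "classify") {
    // High-volume, simple work: cheaper tier, little reasoning, terse output.
    return {
      model: "openai/gpt-5.4-nano",
      providerOptions: {
        openai: { reasoningEffort: "low", textVerbosity: "low" },
      },
    };
  }
  // Harder work: larger tier, more reasoning, moderately detailed output.
  return {
    model: "openai/gpt-5.4-mini",
    providerOptions: {
      openai: { reasoningEffort: "high", textVerbosity: "medium" },
    },
  };
}
```

The returned object is shaped so that it could be spread into a text-generation call such as the AI SDK's `generateText`, alongside a `prompt`, assuming the installed SDK version accepts a gateway model string and these provider options.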

This focus on smaller, specialized models comes as the industry increasingly recognizes the need for efficient, on-device AI. For example, CosmOS, the operating system behind the initially cloud-dependent Humane AI Pin wearable, was repurposed to power an on-device laptop chatbot after HP acquired Humane for a reported $116 million. HP's forthcoming laptop will integrate a GPT OSS 20b model, primarily targeting PC owners who need AI for work. This trajectory underscores a broader trend: highly capable, smaller models are becoming essential for practical, scalable AI deployments outside the data center.

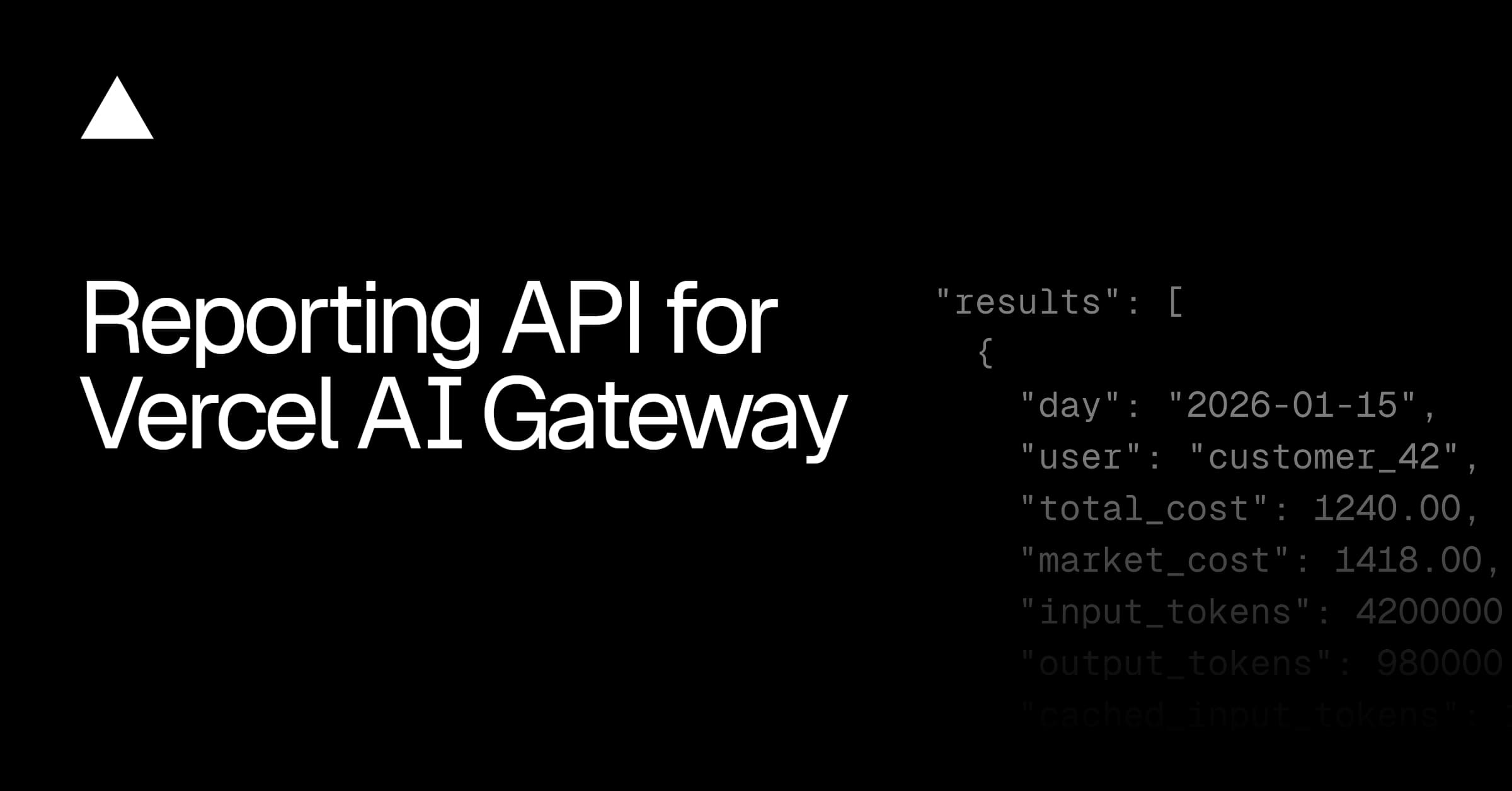

Beyond basic usage tracking and cost management, the Vercel AI Gateway configures intelligent retries, failover mechanisms, and performance optimizations. This translates to higher uptime than integrating directly with individual provider APIs, a critical advantage in production environments. Features like built-in observability, Bring Your Own Key (BYOK) support, and automatic provider routing further streamline the development workflow.
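To make the retry and failover idea concrete, the sketch below shows the kind of logic a gateway takes off the developer's hands. It is not Vercel's implementation: the `Provider` type, the `callWithFailover` helper, and the backoff values are hypothetical, and the providers are plain injected functions so the example runs without any network access.

```typescript
// Illustrative only: a hand-rolled version of retry-then-failover logic.
// A provider is any async function that takes a prompt and returns text.
type Provider = (prompt: string) => Promise<string>;

// Try each provider in order. Each provider gets one attempt plus up to
// `retries` retries with exponential backoff before we fail over to the next.
async function callWithFailover(
  providers: Provider[],
  prompt: string,
  retries = 2,
  baseDelayMs = 100,
): Promise<string> {
  let lastError: unknown = new Error("no providers configured");
  for (const provider of providers) {
    for (let attempt = 0; attempt <= retries; attempt++) {
      try {
        return await provider(prompt);
      } catch (err) {
        lastError = err;
        if (attempt < retries) {
          // Wait baseDelayMs, then 2x, then 4x, ... before retrying.
          const delay = baseDelayMs * 2 ** attempt;
          await new Promise((resolve) => setTimeout(resolve, delay));
        }
      }
    }
  }
  // Every provider failed on every attempt: surface the last error seen.
  throw lastError;
}
```

Writing and tuning this per provider is the overhead that a unified gateway endpoint is meant to remove.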

The push for accessible AI tools is also evident in platforms like ChatGenius. This Las Vegas startup recently launched a 43-feature platform built on OpenAI's GPT-5, automating customer conversations across social media platforms like Instagram and Facebook Messenger. ChatGenius supports 13 languages and serves businesses across 5 industries, showcasing the broad applicability and demand for integrated AI solutions. Such platforms exemplify how readily available AI models are being packaged into powerful, specific-use applications for enterprise clients.

For Developers Building Agents

Integrate GPT-5.4 Mini or Nano using the AI SDK to build more efficient and cost-effective sub-agent workflows. The new verbosity and reasoning parameters offer granular control previously unavailable in this class of models.
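A sub-agent workflow of this kind can be sketched as a fan-out and fan-in. The example below is a minimal illustration under stated assumptions: `runSubAgents` and the `CallModel` type are hypothetical names, the model IDs are assumed, and the split of Nano for workers and Mini for the merge step is one plausible arrangement, not a documented recommendation. The model call is injected so that the sketch stays independent of any particular SDK.

```typescript
// Hypothetical sketch of a sub-agent fan-out. The model call is injected
// so the orchestration logic can run (and be tested) without network access.
type CallModel = (model: string, prompt: string) => Promise<string>;

async function runSubAgents(
  subtasks: string[],
  callModel: CallModel,
  workerModel = "openai/gpt-5.4-nano", // assumed model ID
  mergeModel = "openai/gpt-5.4-mini", // assumed model ID
): Promise<string> {
  // Fan out: one call to the cheaper model per subtask, all in parallel.
  const partials = await Promise.all(
    subtasks.map((task) => callModel(workerModel, task)),
  );
  // Fan in: a single call to the larger model merges the partial results.
  return callModel(mergeModel, `Combine these results:\n${partials.join("\n")}`);
}
```

With N subtasks this makes N worker calls and one merge call, which is why the per-call price of the worker tier dominates the cost of the whole workflow.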

For Startups and Founders

Leverage the Vercel AI Gateway for simplified model integration, unified cost tracking, and enhanced reliability. This reduces development overhead and provides better uptime, crucial for nascent AI applications targeting high-volume use cases.

For Enterprise AI Initiatives

Consider how these compact, powerful models can enable more efficient on-device or edge AI applications, mirroring HP's repurposing of Humane's CosmOS for an on-device laptop chatbot. This allows for specialized, high-performance tasks with potentially lower latency and better privacy.

GPT-5.4 Mini and Nano are OpenAI's latest compact large language models, now available on the Vercel AI Gateway. They are designed for efficient performance in coding and general computer use, especially in sub-agent architectures where multiple smaller models collaborate on larger tasks. GPT-5.4 Nano offers performance remarkably close to the Mini tier but at a significantly lower price point.

GPT-5.4 Mini and Nano enhance AI agent workflows by providing a balance between capability and cost, making them suitable for complex tasks like autonomous agents. GPT-5.4 Mini excels at code generation and tool orchestration, while GPT-5.4 Nano is ideal for high-volume applications. Both models also offer new parameters for controlling verbosity and reasoning levels.

The Vercel AI Gateway simplifies the integration and management of large language models by providing a unified API endpoint. It offers features like usage tracking, cost management, intelligent retries, and failover mechanisms, which leads to higher uptime compared to direct integration with individual provider APIs. The AI Gateway also includes built-in observability.

The industry is recognizing the need for efficient, on-device AI, driving a shift towards smaller, specialized models. These models are becoming essential for practical, scalable AI deployments outside the data center, as demonstrated by companies like HP integrating a GPT OSS 20b model into laptops.

Vercel AI Gateway offers a unified API, cost tracking, and enhanced uptime features. It simplifies AI integration by abstracting away the complexities of managing various large language models. The platform also configures intelligent retries, failover mechanisms, and performance optimizations.
