AI training data giant Mercor, a company valued at $10 billion, faces escalating scrutiny for reportedly soliciting individuals to sell their past work materials, a practice fraught with intellectual property and confidentiality concerns. This controversial strategy unfolds against the backdrop of a recent, significant data breach that exposed sensitive contractor and client information, raising alarms about data security within the booming AI training sector. The incident spotlights the complex ethical and legal landscape companies navigate as they aggressively acquire diverse datasets to fuel advanced AI models.

Mercor's Controversial Data Acquisition Strategy

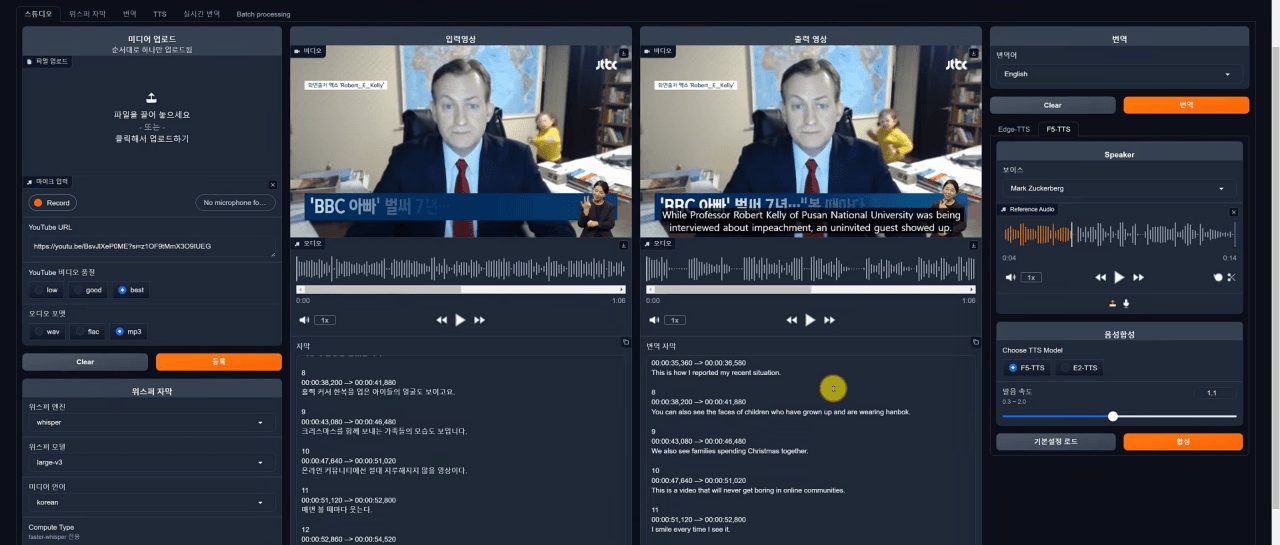

Mercor carved out its niche by hiring domain experts to train AI models, but its latest approach to data acquisition has stirred significant debate. The company has reportedly approached professionals across various industries, including entertainment, offering payment for work completed at previous jobs, according to The Wall Street Journal. Visual effects artists, for instance, reported that Mercor requested "4D physics scenes with camera data, depth and motion/point tracking" – highly specific materials crucial for advanced AI training.

This strategy immediately raises red flags. Employers typically own the intellectual property generated by their staff, and many professionals sign comprehensive confidentiality agreements that restrict sharing work-related information. While Mercor stated to The Wall Street Journal that it "does not buy intellectual property," messages from the company to employers reportedly used the phrase "looking to purchase," highlighting a discrepancy between its public and private messaging. This distinction matters because contractors who attempt to sell protected materials risk severe legal repercussions from their former employers.

The data breach highlights a growing vulnerability in the AI ecosystem: the security of the tools and datasets used to build and train models. Regulators will likely examine how existing data protection frameworks address supply chain attacks, potentially leading to new compliance requirements for companies handling sensitive AI training data. For contractors, the breach underscores the inherent risks associated with providing personal and professional data to AI training platforms.