This development arrives as users report fluctuating performance from leading AI models. For instance, Anthropic acknowledged that changes made in March and April 2026 led to lower-quality responses from Claude Code, the Claude Agent SDK, and Claude Cowork. Specifically, according to The Register, Anthropic lowered Claude Code's default reasoning effort level from high to medium on March 4, then revised the system prompt on April 16 to reduce verbosity.

Why Do AI Agents Struggle with Research?

Most coding agents are adept at producing functional code, yet they frequently fall short when confronted with the nuanced demands of AI research, model training, and infrastructure deployment. They often lack built-in knowledge of the specific frameworks, configurations, and workflows these tasks depend on. The result is a gap between generating code and actually carrying out AI research.

Cat Wu, head of product for Claude Code and Cowork at Anthropic, has highlighted the "fear of missing out" (FOMO) and stress that the relentless pace of AI releases causes among users, as reported by Business Insider. That pressure to keep up with the latest advancements adds to the need for tools that can quickly integrate and leverage new capabilities. Companies are also grappling with the economics of AI automation: some are spending more on AI agents than they would on human workers, and token costs are becoming a significant concern, according to Axios.

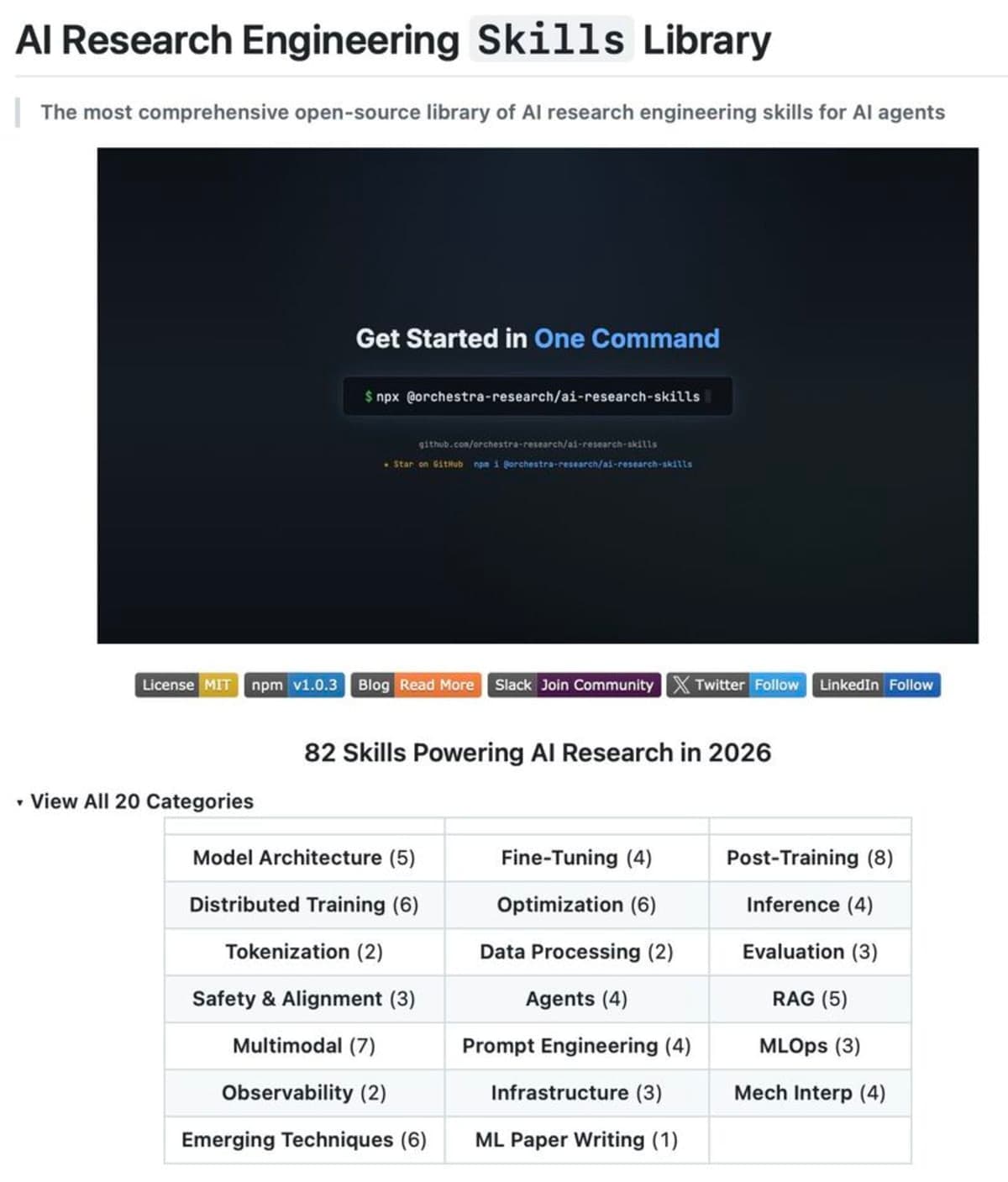

How Will AI Research Skills Empower Developers?

AI Research Skills aims to fill this knowledge gap by providing agents with structured, production-ready guides for over 20 categories of AI research. These "skills" cover a wide range of tools and frameworks, from fine-tuning models with Axolotl and LLaMA-Factory to distributed training with DeepSpeed and Megatron-Core. The library also covers inference with vLLM, retrieval-augmented generation (RAG) with Chroma and Pinecone, and safety techniques such as Constitutional AI.

The library's design ensures compatibility with multiple coding agents, including Claude Code, Codex, Cursor, Gemini CLI, and Qwen Code. This broad support means developers can integrate these specialized research capabilities into their existing agent workflows with minimal effort, often through a single installation command, as noted by Sumanth P. This approach allows agents to access expert-level knowledge instantly, accelerating experimentation and bridging the gap between theoretical understanding and practical application.
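To make the idea of a structured guide more concrete, here is a minimal Python sketch of how an agent harness might load such guides and pick one for a task. Everything in it is an assumption made for illustration: the source does not describe the library's file layout, so the `---` metadata header, the `name` and `description` fields, the two sample guides, and the word-overlap matching in `pick_skill` are all hypothetical and not the library's actual format or selection mechanism.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Skill:
    name: str
    description: str
    body: str


def parse_skill(text: str) -> Skill:
    """Split a guide into a metadata header and a markdown body.

    Assumes (hypothetically) that the header is a block of simple
    `key: value` lines between two `---` lines at the top of the guide.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("guide must start with a '---' metadata header")
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        raise ValueError("metadata header is never closed")
    meta = {}
    for line in lines[1:end]:
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return Skill(meta.get("name", ""), meta.get("description", ""), body)


def pick_skill(task: str, skills: List[Skill]) -> Optional[Skill]:
    """Return the skill whose description shares the most words with the task."""
    task_words = set(task.lower().split())

    def overlap(skill: Skill) -> int:
        return len(task_words & set(skill.description.lower().split()))

    best = max(skills, key=overlap, default=None)
    return best if best is not None and overlap(best) > 0 else None


# Two hypothetical guides, invented for this sketch.
VLLM_GUIDE = """
---
name: vllm-serving
description: serve a model for inference with vllm
---
Hypothetical guide body: steps the agent should follow.
"""

DEEPSPEED_GUIDE = """
---
name: deepspeed-training
description: distributed training with deepspeed
---
Hypothetical guide body: steps the agent should follow.
"""

skills = [parse_skill(VLLM_GUIDE), parse_skill(DEEPSPEED_GUIDE)]
chosen = pick_skill("run distributed training across eight GPUs", skills)
print(chosen.name if chosen else "no matching skill")
```

The point of the sketch is the separation of concerns: a short description lets the agent decide whether a guide is relevant, and the longer body is only read once the guide is chosen. A real agent would likely use the model itself, not word overlap, to make that choice.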

Most “coding agents” plateau at syntax and scaffolding, what you’re really shipping here is a modular prior over MLOps, training, and inference workflows...

— Hans-Peter Nowak, AI Professional

The library offers a clear advantage for teams that want to accelerate their AI development without requiring agents to "learn" complex methodologies from scratch. By embedding expert knowledge directly, AI Research Skills helps agents handle the tooling of modern AI work, allowing researchers to focus on novel approaches rather than boilerplate setup.